MCP stands for Model Context Protocol, an open standard that gives AI models a single, consistent way to connect to external tools, databases, and services. Without MCP, developers have to build a separate integration every time they want an AI agent to read a file, query an API, or run code. With MCP, a single protocol handles all of those connections, so AI agents can work across tools without custom wiring for each one. Anthropic introduced MCP in November 2024, and the standard has since been adopted across major AI platforms including OpenAI, Google DeepMind, and dozens of developer tools.

Key takeaways:

- MCP (Model Context Protocol) is an open standard that connects AI models to external tools, data sources, and services

- MCP replaces one-off API integrations with a universal protocol any AI agent can use

- An MCP server exposes tools and data; an MCP client (your AI agent or IDE) calls those tools at runtime

- MCP is not limited to Claude; it also works with GPT-4o, Gemini, and open-source models

- Developers use MCP to build agentic AI workflows that act on real data without manual integrations per tool

What does MCP stand for in AI?


MCP stands for Model Context Protocol. The name describes what it does: it is a protocol (a shared set of rules) that governs how context (tools, data, instructions) gets delivered to AI models at runtime.

Before MCP, an AI assistant that needed to read a Google Doc, query a database, and then post to Slack required three separate custom integrations. Developers wrote glue code for each connection, maintained it as APIs changed, and rebuilt it for every new AI model they adopted. MCP replaces that pattern with a single standard. Build an MCP server for your tool once, and any MCP-compatible AI client can connect to it.

The protocol was created by Anthropic and open-sourced in November 2024. It follows a client-server architecture inspired by the Language Server Protocol (LSP), which code editors use to support multiple programming languages without rebuilding the editor for each one.

How MCP works: clients, servers, and the host

MCP has three moving parts: the host, the client, and the server.

The host is the application running the AI model. This could be Claude Desktop, Cursor, Windsurf, or any custom AI agent runtime. The host manages connections and enforces permissions.

The MCP client lives inside the host. It opens a connection to one or more MCP servers and sends requests on the model's behalf. When the AI model decides it needs to query a database or read a file, the client is what actually makes that call.

The MCP server is a lightweight process that exposes specific capabilities. A file system MCP server, for example, exposes tools like read_file and write_file. A GitHub MCP server exposes tools like list_pull_requests or create_issue. The server responds to client requests and returns structured results the AI model can reason over.
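To make the client and server roles concrete, here is a minimal sketch of the message exchange behind a single tool call. MCP messages are JSON-RPC 2.0, and `tools/call` is the method a client uses to invoke a tool. The helper names (`make_request`, `text_of`), the `notes.txt` path, and the hand-written server reply are assumptions for illustration, not part of any SDK.

```python
import json
from itertools import count

_ids = count(1)  # JSON-RPC request ids should be unique per connection


def make_request(method, params=None):
    """Build a JSON-RPC 2.0 request of the kind an MCP client sends."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def text_of(response):
    """Join the text blocks of a tools/call result; raise on a protocol error."""
    if "error" in response:
        raise RuntimeError(response["error"]["message"])
    blocks = response["result"]["content"]
    return "\n".join(b["text"] for b in blocks if b["type"] == "text")


# The model decides to read a file; the client turns that into a request.
request = make_request(
    "tools/call",
    {"name": "read_file", "arguments": {"path": "notes.txt"}},
)

# Hypothetical reply from a file system server, written by hand for this sketch.
response = {
    "jsonrpc": "2.0",
    "id": request["id"],  # a response echoes the id of the request it answers
    "result": {
        "content": [{"type": "text", "text": "hello from notes.txt"}],
        "isError": False,
    },
}

print(json.dumps(request))
print(text_of(response))
```

In a real host, the request would be written to a transport and the response read back from it; the model only ever sees the extracted result.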

Client and server communicate over one of two standard transports: stdio (for local processes) and Streamable HTTP (for remote servers). Both are defined in the MCP specification, so any compliant client and server can talk to each other regardless of how they were built.
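As a rough illustration of the stdio transport, messages travel as newline-delimited JSON: one JSON-RPC message per line. The sketch below uses an in-memory `io.StringIO` buffer as a stand-in for a subprocess pipe, and the function names are assumptions made for this example.

```python
import io
import json


def write_message(stream, message):
    """Write one JSON-RPC message as a single line."""
    # json.dumps escapes newlines inside strings, so a message never spans lines.
    stream.write(json.dumps(message, separators=(",", ":")) + "\n")
    stream.flush()


def read_messages(stream):
    """Yield each non-empty line of the stream as a decoded message."""
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)


pipe = io.StringIO()  # stand-in for the server process's stdin
write_message(pipe, {"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
write_message(
    pipe,
    {
        "jsonrpc": "2.0",
        "id": 2,
        "method": "tools/call",
        "params": {"name": "read_file", "arguments": {"path": "a\nb.txt"}},
    },
)

pipe.seek(0)
received = list(read_messages(pipe))
print(len(received), "messages decoded")
```

With a real local server, the host would launch the server as a child process and use that process's stdin and stdout in place of the buffer.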

What MCP servers actually do

An MCP server exposes three types of capabilities: tools, resources, and prompts.

Tools are executable functions the AI model can call, such as a web search tool, a database query tool, or a send-email tool. These are the actions the agent takes.

Resources are read-only data the model can access, such as a file, a database record, or a web page. Resources give the model context it can reason over without executing an action.

Prompts are reusable instruction templates the server can provide. These help standardize how AI agents interact with a given tool or workflow.

This three-part structure is what makes MCP different from a simple API wrapper. MCP servers are designed to be AI-native: they describe their capabilities in a machine-readable format that AI models can discover and use at runtime, rather than relying on developers to read documentation and hard-code each call.
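The three capability types and the discovery step can be sketched with a toy, in-memory server. The method names (`tools/list`, `tools/call`, `resources/read`, `prompts/get`) and result shapes follow the MCP specification as I understand it; the `TinyServer` class, the `add` tool, the resource URI, and the prompt template are hypothetical and are not the official SDK.

```python
class TinyServer:
    """Toy stand-in for an MCP server, kept in memory for illustration."""

    def __init__(self):
        self.tools = {}      # name -> (descriptor, function)
        self.resources = {}  # uri -> text content
        self.prompts = {}    # name -> template string

    def add_tool(self, name, description, input_schema, fn):
        descriptor = {
            "name": name,
            "description": description,
            "inputSchema": input_schema,  # JSON Schema describing the arguments
        }
        self.tools[name] = (descriptor, fn)

    def handle(self, method, params=None):
        params = params or {}
        if method == "tools/list":  # discovery: what can this server do?
            return {"tools": [d for d, _ in self.tools.values()]}
        if method == "tools/call":  # action: run a tool with arguments
            _, fn = self.tools[params["name"]]
            text = str(fn(**params.get("arguments", {})))
            return {"content": [{"type": "text", "text": text}], "isError": False}
        if method == "resources/read":  # read-only context
            uri = params["uri"]
            return {"contents": [{"uri": uri, "mimeType": "text/plain",
                                  "text": self.resources[uri]}]}
        if method == "prompts/get":  # reusable instruction template
            text = self.prompts[params["name"]].format(**params.get("arguments", {}))
            return {"messages": [{"role": "user",
                                  "content": {"type": "text", "text": text}}]}
        raise ValueError(f"unsupported method: {method}")


server = TinyServer()
server.add_tool(
    "add",
    "Add two integers.",
    {"type": "object",
     "properties": {"a": {"type": "integer"}, "b": {"type": "integer"}},
     "required": ["a", "b"]},
    lambda a, b: a + b,
)
server.resources["file:///brief.txt"] = "Launch video, 30 seconds."
server.prompts["summarize"] = "Summarize this for {audience}: {text}"

listed = server.handle("tools/list")
called = server.handle("tools/call", {"name": "add", "arguments": {"a": 2, "b": 3}})
print(listed["tools"][0]["name"], called["content"][0]["text"])
```

The key point is that the client learns the tool's name, description, and argument schema from `tools/list` at runtime, which is what lets a model use a tool it was never specifically programmed for.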

MCP in agentic AI: why it matters for autonomous workflows

Agentic AI refers to AI systems that take multi-step actions autonomously, rather than just answering a single question. An agent that can browse the web, write code, run tests, and open a pull request is doing agentic work. MCP is an infrastructure layer that makes this kind of tool use practical to build and maintain in production.
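The multi-step behavior described above usually comes down to a loop: the model picks the next action, the runtime executes it, and the result is fed back in. The sketch below is a simplified illustration under stated assumptions: `scripted_policy` is a hard-coded stand-in for a real model, and the two tools are hypothetical plain functions rather than calls to real MCP servers.

```python
def run_agent(decide, tools, goal, max_steps=5):
    """Ask the policy for the next step, run the chosen tool, feed the result back."""
    history = [("goal", goal)]
    for _ in range(max_steps):
        step = decide(history)
        if step["type"] == "final":
            return step["text"], history
        result = tools[step["tool"]](**step["arguments"])
        history.append((step["tool"], result))
    raise RuntimeError("step budget exhausted before the agent finished")


# Hypothetical tools; in an MCP setup these would be tools/call requests.
def run_tests(path):
    return f"2 passed in {path}"


def open_pull_request(title):
    return f"PR opened: {title}"


def scripted_policy(history):
    """Stand-in for a model: choose the next step from what has already run."""
    done = [name for name, _ in history]
    if "run_tests" not in done:
        return {"type": "tool", "tool": "run_tests", "arguments": {"path": "tests/"}}
    if "open_pull_request" not in done:
        return {"type": "tool", "tool": "open_pull_request",
                "arguments": {"title": "Fix parser"}}
    return {"type": "final", "text": "Tests passed and a PR is open."}


answer, history = run_agent(
    scripted_policy,
    {"run_tests": run_tests, "open_pull_request": open_pull_request},
    "ship the fix",
)
print(answer)
```

The `max_steps` budget is a design choice worth keeping in any real loop, since it bounds how long an agent can act without returning control.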

Before MCP, agent frameworks like LangChain, CrewAI, and AutoGen each built their own tool-integration patterns. A tool built for one framework would not work in another without a rewrite. MCP provides a common layer so tools are portable across frameworks and models. A Notion MCP server built today works with Claude, GPT-4o, or any other model without modification, as long as the host application running that model includes an MCP client.

This portability has real consequences for teams building AI agents. You can invest in building or sourcing MCP servers for your core business tools (CRM, project management, code repositories, analytics) and reuse them across every agent you build, regardless of which model you choose. Choosing the right cloud hosting platform to deploy those servers helps keep them scalable, reliable, and portable across environments as your stack evolves.

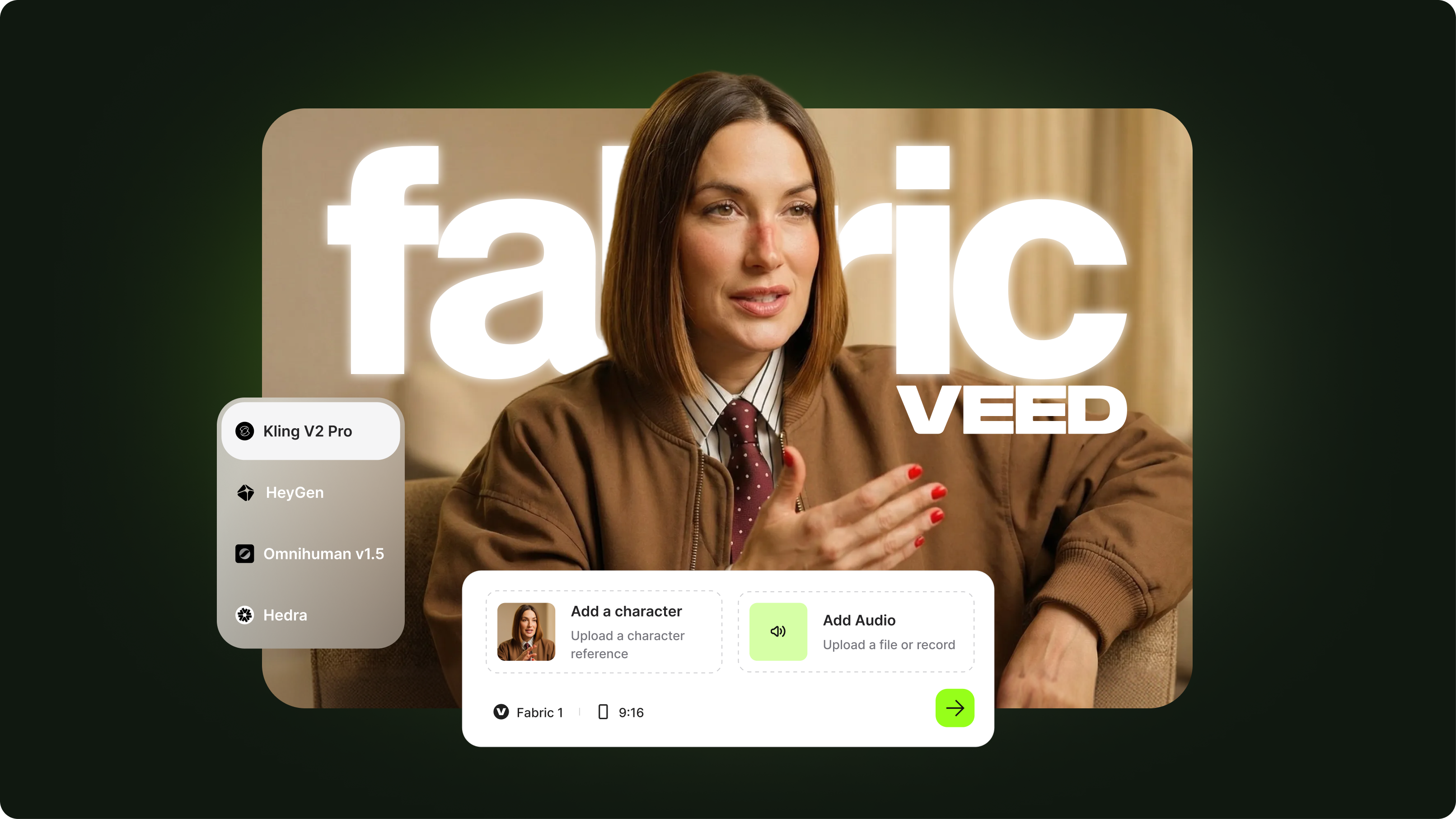

VEED's AI video creation platform is one example of how this integration pattern plays out: AI-powered workflows that need to pull from external context (scripts, assets, briefs) can connect to that context through standardized protocols rather than custom-built pipes.

MCP in code editors: Cursor, Windsurf, and IDE integration

The strongest early adoption of MCP has been in AI-powered code editors. Cursor and Windsurf both support MCP, meaning developers can connect their coding environment to any MCP server and have the AI assistant act on live data, not just the files open in the editor.

A developer using Cursor with a GitHub MCP server can ask their AI assistant to check open pull requests, review a specific diff, or create a new issue, all without leaving the editor. A database MCP server lets the assistant query production schemas to answer questions about data structure. A docs MCP server means the assistant can pull current API documentation rather than relying on training data that may be out of date.
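Connecting an editor to a server is typically a matter of configuration rather than code. Several hosts read a JSON file with an `mcpServers` map that tells them how to launch each local server. The sketch below generates such a file's contents. Treat the details as assumptions to check against your editor's documentation: the file location varies by host, the project path is a placeholder, and the filesystem server package name is the reference server as I understand it. The helper `stdio_server_entry` is hypothetical.

```python
import json


def stdio_server_entry(command, args, env=None):
    """Describe how a host should launch one local (stdio) MCP server."""
    entry = {"command": command, "args": list(args)}
    if env:
        entry["env"] = dict(env)  # e.g. API tokens the server needs
    return entry


config = {
    "mcpServers": {
        # Assumed example: the reference filesystem server, run through npx.
        "filesystem": stdio_server_entry(
            "npx",
            ["-y", "@modelcontextprotocol/server-filesystem", "/path/to/project"],
        ),
    }
}

print(json.dumps(config, indent=2))
```

Once the host picks up the configuration, it starts the server process itself and lists the server's tools for the assistant to use.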

This is why searches for 'cursor ai mcp' and 'windsurf ai mcp' cluster together with 'mcp server setup': developers are not just learning what MCP is; they are trying to get it running in the tools they already use every day.

MCP vs RAG: different tools for grounding AI

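RAG (retrieval-augmented generation) and MCP both give AI models access to external information, but they work differently and serve different purposes. RAG retrieves relevant passages from an indexed corpus and inserts them into the prompt before the model generates a response. MCP lets the model request data or trigger actions in live systems while it works.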

MCP across AI models: not just Claude

MCP was created by Anthropic, but it is an open standard, not a Claude-exclusive feature. OpenAI added MCP support to its Agents SDK in March 2025 and to the Responses API in May 2025. Google DeepMind has implemented MCP support in its Gemini tooling. The result is that MCP servers built for one model provider work across all of them.

This cross-model portability is one of MCP's defining design choices. A company that builds an MCP server for its internal data warehouse can use that same server whether its agents run on Claude, GPT-4o, or an open-source model. There is no lock-in to a specific AI provider at the tool layer.
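One way to see why the tool layer is provider-neutral: an MCP tool descriptor carries a name, a description, and a JSON Schema for its arguments, which is the same information each provider's tool-calling API asks for. The sketch below maps one descriptor onto two provider formats. The target shapes reflect my understanding of Anthropic's tool format and OpenAI's function-tool format, so verify them against current provider documentation. The `query_warehouse` tool is hypothetical, and in practice the host or SDK usually performs this translation.

```python
def to_anthropic_tool(mcp_tool):
    """Map an MCP tool descriptor to an Anthropic-style tool definition."""
    return {
        "name": mcp_tool["name"],
        "description": mcp_tool.get("description", ""),
        "input_schema": mcp_tool["inputSchema"],
    }


def to_openai_function_tool(mcp_tool):
    """Map an MCP tool descriptor to an OpenAI-style function tool definition."""
    return {
        "type": "function",
        "function": {
            "name": mcp_tool["name"],
            "description": mcp_tool.get("description", ""),
            "parameters": mcp_tool["inputSchema"],
        },
    }


# Hypothetical descriptor, shaped like an entry in a tools/list result.
warehouse_tool = {
    "name": "query_warehouse",
    "description": "Run a read-only SQL query against the data warehouse.",
    "inputSchema": {
        "type": "object",
        "properties": {"sql": {"type": "string"}},
        "required": ["sql"],
    },
}

anthropic_tool = to_anthropic_tool(warehouse_tool)
openai_tool = to_openai_function_tool(warehouse_tool)
print(anthropic_tool["name"], openai_tool["function"]["name"])
```

The server itself never changes; only this thin adapter differs per provider.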

Major platforms that have added MCP support include Cloudflare (MCP server hosting), Docker (containerized MCP servers), Block, Apollo, Replit, Sourcegraph, and Zed. Enterprise platforms including Salesforce and Azure AI Foundry have also announced MCP integration, indicating adoption beyond the developer community into enterprise software. Enterprise AI workplace assistants like Glean have similarly adopted MCP to connect employees with company knowledge across every tool in their stack. For organizations without in-house AI expertise, working with AI development companies that specialize in MCP implementation can accelerate that transition significantly.

Spring AI and MCP: Java ecosystem adoption

Java developers working with the Spring AI framework can build MCP clients and servers using Spring's familiar dependency injection and configuration patterns. Spring AI's MCP module lets teams expose existing Spring services as MCP tools with minimal additional code, which has driven significant interest in 'spring ai mcp' among enterprise Java shops that want to add AI agent capabilities to existing applications.

A Spring Boot application with a REST service can be wrapped as an MCP server, making that service callable by any MCP-compatible AI agent. This is particularly relevant for enterprises that have years of business logic in Java applications and want to connect AI agents to those systems without rewriting them.

Best MCP servers for building AI agents and apps

The MCP ecosystem has grown rapidly since the standard's release. The following categories cover the highest-utility servers for teams building AI agents:

The best MCP server for a given use case depends on what the agent needs to act on. For coding agents, GitHub plus a filesystem server covers most needs. For enterprise workflows, a CRM MCP server plus a communication tool (Slack or email) covers the core loop.

MCP and AI video creation

Video teams building AI-assisted production workflows can use MCP to connect AI agents to their asset libraries, script repositories, and project management tools. An agent that has access to a brief, a shot list, and a file system can take multi-step actions across those sources, rather than requiring a human to manually pass information from one tool to the next.

VEED's AI video creation platform is built for teams that want AI to handle repetitive video tasks, from captioning to translation to avatar generation. As MCP adoption grows across the creative tool stack, the ability to connect AI agents to video workflows at the protocol level opens up new options for automating content production end to end.

For teams already experimenting with AI agents, exploring VEED's AI video tools alongside an MCP-connected workflow is a practical next step toward fully automated video content.
