You can generate a talking head video in under a minute with most of the APIs on this list. That part is solved. The problem is what comes next.

The generated video still needs subtitles. The background needs cleaning up. The aspect ratio needs adjusting for Instagram versus LinkedIn. The brand colours and logo need dropping in. And now you're in three other tools, spending more time on post-production than you did on generation.

This comparison covers the six leading talking head video API options in 2026: D-ID, HeyGen, Synthesia, Tavus, open-source alternatives, and VEED. For each, it looks at what the API handles beyond the avatar, what it actually costs, and which use case it fits best. No vendor wrote this comparison. We've included VEED's real limitations alongside its strengths.

Key takeaways:

- Talking head video APIs let you generate a speaking avatar from a photo, script, or audio file, without a camera, studio, or actor.

- The main options are D-ID (fast, simple), HeyGen (best realism and language coverage), Synthesia (enterprise training), Tavus (real-time conversational), and open-source alternatives like SadTalker.

- Most APIs stop at generation. VEED is the only option that handles the full pipeline: avatar generation, lip sync, subtitles, background removal, and branding in one workflow.

- D-ID starts at $4.70/mo; HeyGen and Tavus from $29/mo; Synthesia from $89/year; VEED from $12/mo. Open-source options are free but require your own infrastructure.

- Choose based on use case: real-time conversations go to Tavus; avatar realism at scale to HeyGen; quick social clips to D-ID; full post-production pipeline to VEED; self-hosted to SadTalker or Wav2Lip.


What is a talking head video API?

A talking head video API is a programmatic interface that lets software generate a video of a speaking avatar, from a photo, script, audio file, or text prompt, without any camera, studio, or human presenter.

Developers use them to build at scale: personalised sales outreach videos, localised training content, AI avatar channels, automated video newsletters, and onboarding flows that would be impossibly expensive to film manually.
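To make the idea concrete, here is a minimal sketch of how a batch of personalised outreach requests might be assembled before being sent to an API. The field names (`source_image_url`, `script`, `voice`), the `TalkingHeadRequest` class, and the `build_request` helper are all hypothetical and do not match any specific provider on this list. Each real API defines its own schema, so treat this as an illustration of the shape of the work, not as working integration code.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class TalkingHeadRequest:
    """Hypothetical request body; real providers use their own field names."""
    source_image_url: str          # portrait photo to animate
    script: str                    # text the avatar should speak
    voice: str = "en-US-neutral"   # placeholder voice identifier (assumption)


def build_request(image_url: str, template: str, first_name: str) -> TalkingHeadRequest:
    """Fill a script template for one recipient."""
    if not template.strip():
        raise ValueError("script template must not be empty")
    return TalkingHeadRequest(
        source_image_url=image_url,
        script=template.format(first_name=first_name),
    )


recipients = ["Ana", "Ben"]
template = "Hi {first_name}, here is a quick update on your account."

# One request per recipient, all sharing the same presenter photo.
batch = [
    build_request("https://example.com/presenter.jpg", template, name)
    for name in recipients
]

# Serialise each request as JSON, ready to send to whichever API you choose.
payloads = [json.dumps(asdict(req)) for req in batch]
```

The point of the sketch is that personalisation happens in the script text, while the presenter image and voice stay constant across the batch.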

The term 'talking head' comes from broadcast TV. It describes a shot framed from the shoulders up, focused on a person speaking directly to camera. AI talking head APIs replicate this format synthetically, using generative models to animate faces and sync lip movement to audio.

New to video APIs? See our guide, "What is a video API?", which covers the fundamentals before you compare options.

Comparison at a glance

Pricing as of April 2026. Always verify on each provider's official pricing page as plans change frequently.
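The entry prices quoted in this article mix monthly and yearly billing, which makes them hard to compare at a glance. The sketch below normalises them to a monthly figure. The amounts are the ones stated in this article; the `monthly_cost` helper is illustrative. Entry tiers also differ in included minutes, credits, and API access, so this is a rough comparison, not a like-for-like one.

```python
# Entry-level prices as quoted in this article (April 2026).
# Verify on each provider's pricing page before relying on them.
ENTRY_PRICES = {
    "D-ID": (4.70, "month"),
    "HeyGen": (29.00, "month"),
    "Synthesia": (89.00, "year"),
    "Tavus": (29.00, "month"),
    "VEED": (12.00, "month"),
}


def monthly_cost(amount: float, period: str) -> float:
    """Convert a quoted price to an equivalent monthly cost."""
    if period == "month":
        return amount
    if period == "year":
        return amount / 12
    raise ValueError(f"unknown billing period: {period!r}")


monthly = {
    name: round(monthly_cost(amount, period), 2)
    for name, (amount, period) in ENTRY_PRICES.items()
}

# Print cheapest entry tier first.
for name, cost in sorted(monthly.items(), key=lambda item: item[1]):
    print(f"{name}: ${cost:.2f}/mo")
```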

D-ID: best for quick photo-to-portrait clips

Verdict: The fastest way to turn a headshot into a speaking video. Great for quick social clips. Runs into limits for anything longer or more polished.

D-ID was one of the first consumer-facing talking head tools, and it still does one thing better than almost anyone: you upload a portrait photo, type a script, and a speaking video is ready in under a minute. For LinkedIn hooks, quick product teasers, and social storytelling, that immediacy is genuinely useful.

The limitations become clear at scale. Lip sync quality holds up well under 60 seconds but drifts noticeably on longer clips. The output is portrait-only: no full body, no gestures, no scene changes. And there's no built-in editor, so every clip goes straight to a post-production tool. The D-ID API is available but developer documentation is limited compared to HeyGen or Tavus.

Best for: Social media clips, quick demos, photo-animation use cases.

Not ideal for: Long-form content, multilingual workflows at scale, any use case needing post-production in the same pipeline.

Pricing: Lite from $4.70/mo; Pro $49/mo; Advanced $299/mo (annual billing). Verify at D-ID's pricing page.

HeyGen: best avatar realism and language coverage

Verdict: The most complete studio-style API for scripted avatar video. Best avatar library, best lip sync, 175+ languages. API access is on paid plans only.

HeyGen is the benchmark that other avatar video platforms are measured against. Its avatar library exceeds 1,100 options (including custom avatar creation), lip sync quality is consistently the strongest in testing, and 175+ language support with full lip-synced dubbing is unmatched in this category.

The API is available on paid plans and well-documented, making it a realistic choice for developers building video at scale. Templates, brand kits, and team collaboration are built in. The limitations: it's more expensive than most alternatives at volume, API access is gated behind higher tiers, and like most platforms here, it outputs a video file. Your post-production workflow is still on you.

Best for: Marketing video at scale, multilingual localisation, onboarding content, sales outreach.

Not ideal for: Real-time interaction; teams needing full post-production in a single API.

Pricing: One free credit; paid plans from $29/mo. 4K resolution and API access on higher tiers. Verify at HeyGen's pricing page.

Synthesia: best for enterprise training video

Verdict: Reliable, structured, and compliance-ready. The default choice for corporate L&D. API is in beta and not actively prioritised.

Synthesia's strength is consistency and enterprise-readiness. Its 140+ avatars, 120+ language support, PowerPoint import, and LMS integrations make it the go-to for HR, legal, and compliance training teams who need structured, repeatable video output at predictable cost.

For developers, the picture is more complicated. Synthesia's API is officially in beta and, according to publicly available information, is not a development priority. Teams building API-first products should factor in the risk of limited developer support. Real-time interaction is not available.

Best for: Structured enterprise training, compliance videos, multilingual L&D content.

Not ideal for: API-first product builds, real-time use cases, high-volume low-cost workflows.

Pricing: Personal plan from $89/year; Business from $22.50/mo. Verify at Synthesia's pricing page.

Tavus: best for real-time conversational video

Verdict: The only API purpose-built for real-time AI human conversations. Developer-first. Overkill if you only need pre-rendered avatar video.

Tavus operates in a different category from the others. Its Conversational Video Interface (CVI) enables real-time, face-to-face AI interactions: the avatar sees, hears, and responds in natural conversation, with latency under 30ms. For virtual assistants, interactive onboarding, customer support agents, and AI-powered interviews, nothing else in this list comes close.

For pre-rendered talking head video, Tavus also delivers. Its Phoenix-3 model produces high-quality avatar video in 30+ languages, with a developer-first SDK, webhooks, and white-label endpoints. The trade-off is price: Tavus is well-suited for products where high-fidelity interaction justifies the cost, but it's heavier than needed for bulk pre-rendered content.

Best for: Real-time AI humans, interactive avatars, conversational video interfaces, high-fidelity digital twins.

Not ideal for: High-volume pre-rendered video at low cost; full post-production pipeline.

Pricing: Free testing plan; paid from $29/mo; enterprise pricing available. Verify at Tavus's pricing page.

Open-source alternatives: SadTalker, Wav2Lip, and others

Verdict: Real options for developers with infrastructure and technical tolerance. No API cost, but significant setup and maintenance overhead.

A meaningful segment of the developer community evaluating talking head APIs is also evaluating self-hosted open-source alternatives. The most searched are:

- SadTalker: GitHub-hosted model for generating talking head video from a still image and audio. Strong community, actively maintained. Requires GPU infrastructure. Good output quality for portrait-style clips.

- Wav2Lip: Lip sync model that syncs existing video to new audio. Not a full avatar generator, but the go-to for lip sync on real footage. Setup is more involved than SadTalker.

- LivePortrait / MuseTalk: Newer open-source models with improving quality. Community-supported.

The honest calculus: if you have a GPU server, engineering time, and a use case where per-call API cost matters at your volume, open source is worth evaluating. If you need reliability, support, and a production-grade SLA, a paid API is the safer foundation.
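That trade-off can be framed as a break-even calculation. The sketch below is a simplified model: self-hosting has a fixed monthly cost (GPU server plus maintenance time) and a small per-video cost, while a paid API is assumed to charge purely per video. The function name and every number in the usage example are illustrative assumptions, not quotes from any provider.

```python
import math
from typing import Optional


def break_even_videos(fixed_monthly_self_host: float,
                      api_cost_per_video: float,
                      self_host_cost_per_video: float = 0.0) -> Optional[int]:
    """Smallest monthly video count at which self-hosting becomes cheaper.

    Self-hosting costs  fixed + n * self_host_cost_per_video.
    A paid API costs    n * api_cost_per_video.
    Returns None when self-hosting never wins on cost.
    """
    saving_per_video = api_cost_per_video - self_host_cost_per_video
    if saving_per_video <= 0:
        return None
    # Self-hosting is strictly cheaper once n > fixed / saving_per_video.
    return math.floor(fixed_monthly_self_host / saving_per_video) + 1


# Illustrative inputs only: $1,200/month for server and upkeep,
# $0.75 per video via an API, $0.25 per video in self-hosted compute.
threshold = break_even_videos(1200.0, 0.75, 0.25)
print(threshold)  # 2401 with these illustrative inputs
```

Below the threshold, the paid API is cheaper even before you count the support and SLA it comes with.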

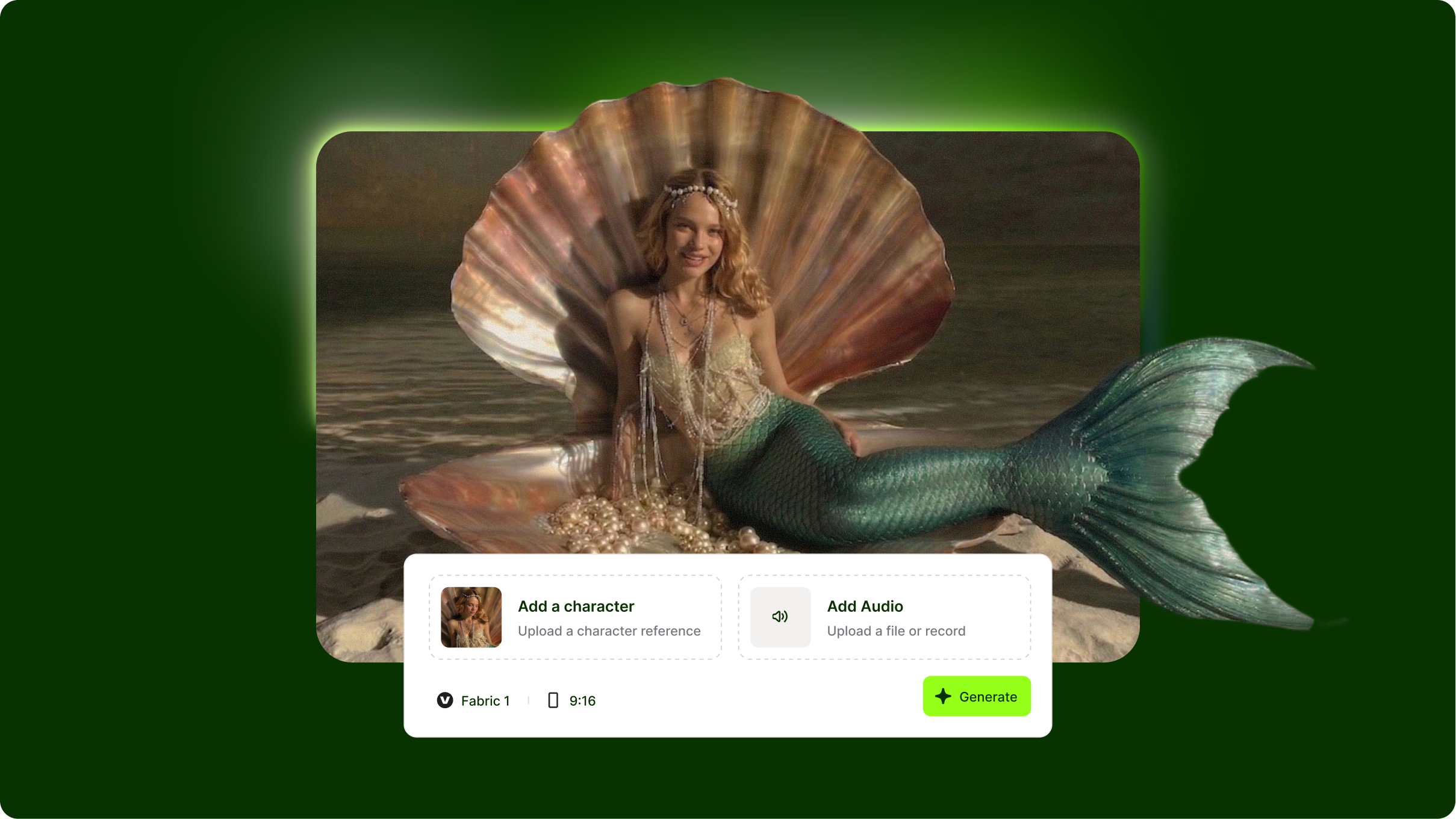

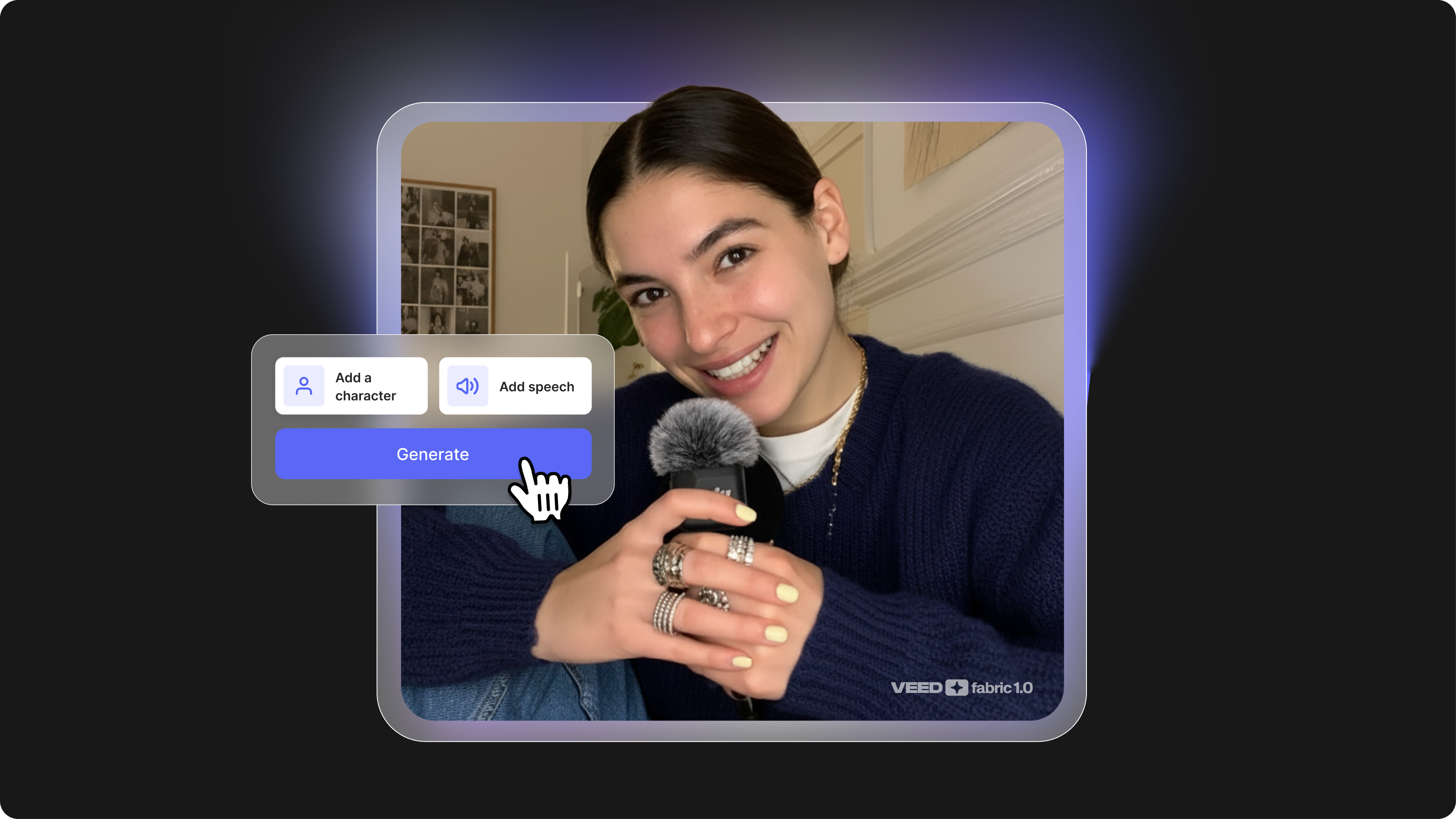

VEED: the full pipeline option

Verdict: The only API that handles what happens after generation. Generate an avatar, add subtitles, remove the background, apply branding, and export, all in one workflow. Smaller avatar library than HeyGen, not real-time.

Every other API on this list gives you a video file and then waves goodbye. VEED's API starts where the others stop.

VEED's differentiator is not that it generates better avatars than HeyGen (it doesn't claim to) or that it handles real-time conversation better than Tavus (it doesn't). The differentiator is that VEED handles the full pipeline, from avatar generation to the moment a video is post-ready, in a single connected API workflow.

What that looks like in practice:

- Fabric 1.0 API: AI video generation from a text prompt

- Lip Sync API: sync lip movement to a new audio track in a different language

- Background Remover API: remove the background and replace it with a branded environment

- Add auto-generated subtitles burned into the video

- Export in the right format and aspect ratio for each social platform

That's five steps that would normally require five different tools. With VEED's API, it's one workflow.
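As a rough mental model, the five steps above can be thought of as an ordered chain of operations on one job. The sketch below is not VEED's API: `VideoJob`, the step functions, and the platform-to-aspect-ratio table are hypothetical placeholders that only record what a real integration would do at each stage. Consult the VEED API documentation for the actual endpoints and parameters.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class VideoJob:
    """Hypothetical job record passed through each pipeline step."""
    prompt: str
    aspect_ratio: str = "16:9"
    steps_done: List[str] = field(default_factory=list)


def make_step(name: str) -> Callable[[VideoJob], VideoJob]:
    """Build a placeholder step; a real one would call the matching API."""
    def step(job: VideoJob) -> VideoJob:
        job.steps_done.append(name)
        return job
    return step


# Order mirrors the list above; export is handled separately per platform.
PIPELINE = [make_step(name) for name in
            ("generate", "lip_sync", "remove_background", "add_subtitles")]

# Illustrative aspect ratios (assumptions); check each platform's current specs.
EXPORT_RATIOS = {"instagram_reel": "9:16", "linkedin_feed": "16:9"}


def run_pipeline(prompt: str, platform: str) -> VideoJob:
    """Run every step in order, then set the export format for one platform."""
    job = VideoJob(prompt=prompt)
    for step in PIPELINE:
        job = step(job)
    job.aspect_ratio = EXPORT_RATIOS[platform]
    job.steps_done.append("export")
    return job


job = run_pipeline("Welcome video for new customers", "instagram_reel")
```

Modelling the workflow as a list of steps keeps the order explicit and makes it easy to see where a separate tool would otherwise have to take over.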

Best for: Content teams and developers who need the full post-production pipeline, not just avatar generation, handled programmatically.

Real limitations: Avatar library is smaller than HeyGen's. Not built for real-time interaction. If your use case is purely avatar realism or live conversation, evaluate HeyGen or Tavus first.

VEED's talking head API:

- Fabric 1.0 API: AI video generation from a text prompt

- Lip Sync API: sync audio to avatar in any language

- Background Remover API: remove or replace backgrounds at scale

- VEED API overview: full documentation and API access

Pricing: From $12/mo. Verify at veed.io/api.


How to choose: decision framework
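The use-case guidance in this article can be condensed into a simple lookup. The sketch below encodes only the recommendations stated in the key takeaways and the per-provider verdicts; the use-case keys and the `recommend` function are illustrative names, and a real decision should also weigh budget, volume, and documentation quality.

```python
# Primary use case -> option this article recommends evaluating first.
RECOMMENDATIONS = {
    "real_time_conversation": "Tavus",
    "avatar_realism_at_scale": "HeyGen",
    "quick_social_clips": "D-ID",
    "enterprise_training": "Synthesia",
    "full_post_production": "VEED",
    "self_hosted": "SadTalker or Wav2Lip",
}


def recommend(use_case: str) -> str:
    """Return the article's suggested starting point for a use case."""
    try:
        return RECOMMENDATIONS[use_case]
    except KeyError:
        known = ", ".join(sorted(RECOMMENDATIONS))
        raise ValueError(f"unknown use case {use_case!r}; expected one of: {known}") from None


print(recommend("quick_social_clips"))
```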

Recap and final thoughts

Here's what to remember:

- Generation is step one: every API on this list can produce a talking head video. The real question is what it takes to get from that raw output to a post-ready video.

- D-ID is fastest for simple clips; HeyGen wins on realism and language coverage; Synthesia is the enterprise default; Tavus is the only real-time option.

- Open source is viable if you have engineering bandwidth and want to avoid per-call costs, but it's not a turnkey API solution.

- VEED is the only full-pipeline option: generation, lip sync, background removal, subtitles, and branding in one connected workflow.

- The right API choice saves weeks of integration work, so match the tool to your use case before you start building.

Next step: See what VEED's talking head video API can do for your pipeline: explore VEED's API here.
